rustc: Handle #[inline(always)] at -O0

This commit updates the handling of `#[inline(always)]` functions at -O0 to ensure that they're always inlined regardless of the number of codegen units used.

Closes #45201

Fix data-layout field in x86_64-unknown-linux-gnu.json test file

The current data-layout causes the following error:

> rustc: /checkout/src/llvm/lib/CodeGen/MachineFunction.cpp:151: void llvm::MachineFunction::init(): Assertion `Target.isCompatibleDataLayout(getDataLayout()) && "Can't create a MachineFunction using a Module with a " "Target-incompatible DataLayout attached\n"' failed.

The new value was generated according to [this comment by @japaric](https://github.com/rust-lang/rust/issues/31367#issuecomment-213595571).

This commit tweaks the behavior of inlining functions into multiple codegen units when rustc is compiling in debug mode. Today rustc unconditionally translates `#[inline]` functions into every codegen unit that needs them, marking their linkage as `internal`. This commit changes the behavior so that in debug mode (compiling at `-O0`) rustc will instead translate `#[inline]` functions into only *one* codegen unit, forcing all other codegen units to reference this one copy.

The goal here is to improve debug compile times by reducing the amount of translation that happens on behalf of multiple codegen units. It was discovered in #44941 that increasing the number of codegen units had the adverse side effect of increasing the overall work done by the compiler, and the suspicion here was that the compiler was inlining, translating, and codegen'ing more functions with more codegen units (for example, `String` would be inlined into basically every codegen unit that used it). The strategy in this commit should reduce the cost of `#[inline]` functions to the equivalent of one codegen unit, which only translates and codegens inline functions once.

Collected [data] shows that this does indeed improve the situation from [before]: the overall cpu-clock time increases at a much slower rate, and when pinned to one core rustc does not consume significantly more wall clock time than with one codegen unit.

One caveat of this commit is that the symbol names for inlined functions that are only translated once needed some slight tweaking. These inline functions could be translated into multiple crates and we need to make sure the symbols don't collide, so the crate name/disambiguator is mixed into the symbol name hash in these situations.

[data]: https://github.com/rust-lang/rust/issues/44941#issuecomment-334880911

[before]: https://github.com/rust-lang/rust/issues/44941#issuecomment-334583384
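A minimal sketch of the symbol-hash tweak, assuming `std`'s `DefaultHasher` as a stand-in for rustc's real symbol hasher; `symbol_hash` and its parameters are hypothetical names for illustration. The instantiating crate is `Some(..)` only for `#[inline]` items that are translated once per crate:

```rust
use std::collections::hash_map::DefaultHasher;
use std::hash::{Hash, Hasher};

/// Hypothetical helper: the hash appended to a mangled symbol name.
///
/// `instantiating_crate` is `Some(crate_name)` only for `#[inline]` items that,
/// in debug mode, are translated into a single codegen unit and referenced from
/// the others. Mixing the crate name in keeps copies emitted by different crates
/// from colliding at link time.
fn symbol_hash(item_path: &str, generic_args: &[&str], instantiating_crate: Option<&str>) -> u64 {
    let mut hasher = DefaultHasher::new();
    item_path.hash(&mut hasher);
    generic_args.hash(&mut hasher);
    // `None` and `Some(..)` hash differently, so ordinary items are unaffected
    // by the crate name while shared inline copies get a per-crate hash.
    instantiating_crate.hash(&mut hasher);
    hasher.finish()
}
```

`DefaultHasher`'s output is not guaranteed to be stable across Rust releases, so a real compiler needs a fixed hash function; the sketch only shows where the crate name enters the hash.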

This commit is an implementation of LLVM's ThinLTO for consumption in rustc itself. Today LTO works by merging all relevant LLVM modules into one and then running optimization passes. "Thin" LTO operates differently: the work is more sharded, which opens up opportunities to optimize codegen units in parallel. Further down the road ThinLTO also allows *incremental* LTO, which should enable even faster release builds without compromising on the performance we have today.

This commit uses a `-Z thinlto` flag to gate whether ThinLTO is enabled. It also implements two forms of ThinLTO:

* In one mode we'll *only* perform ThinLTO over the codegen units produced in a single compilation. That is, we won't load upstream rlibs, but will instead just perform ThinLTO amongst all codegen units produced by the compiler for the local crate. This is intended to emulate a desired end point where we have codegen units turned on by default for all crates and ThinLTO allows us to do this without performance loss.

* In another mode, like full LTO today, we'll optimize all upstream dependencies in "thin" mode. Unlike today, however, this LTO step is fully parallelized, so it should finish much more quickly.

There are a good number of comments about what the implementation is doing and where it came from, but the tl;dr is that most of the support here is currently copied from upstream LLVM. This code duplication is done for a number of reasons:

* Controlling parallelism means we can use the existing jobserver support to avoid overloading machines.

* We will likely want a slightly different form of incremental caching which integrates with our own incremental strategy, but this is yet to be determined.

* This buys us some flexibility about when/where we run ThinLTO, as well as having it tailored to fit our needs for the time being.

* Finally, this allows us to reuse some artifacts such as our `TargetMachine` creation, where not all of the options we use today are necessarily supported by upstream LLVM yet.

My hope is that we can get some experience with this copy/paste in tree and then eventually upstream some work to LLVM itself to avoid the duplication while still ensuring our needs are met. Otherwise I fear that maintaining these bindings may be quite costly over the years with LLVM updates!

Fix native main() signature on 64-bit

Hello,

in the LLVM IR produced by rustc on x86_64-linux-gnu, the native `main()` function had incorrect types for the function result and the `argc` parameter: `i64`, while it should be `i32` (really `c_int`). See also #20064, #29633.

So I've attempted a fix here. I tested it by checking the LLVM IR produced with `--target x86_64-unknown-linux-gnu` and `i686-unknown-linux-gnu`. I also tried running the tests (`./x.py test`), but I'm getting two failures both with and without the patch, which I'm guessing are unrelated.
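For reference, a sketch of the C-level `main` signature the generated entry point should match, written as a Rust `extern "C"` function. `native_main_like` is a hypothetical name (defining a real `main` symbol would clash with the Rust one). `c_int` is 32 bits on both x86_64 and i686 Linux, which is why a pointer-sized `i64` was wrong:

```rust
use std::os::raw::{c_char, c_int};

/// Hypothetical stand-in with the same shape as C's `int main(int argc, char **argv)`.
/// Both the return type and `argc` are `c_int`, not a pointer-sized integer.
extern "C" fn native_main_like(argc: c_int, _argv: *const *const c_char) -> c_int {
    // Return the argument count so the signature can be exercised directly.
    argc
}
```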

This commit changes the default of rustc to use 32 codegen units when compiling in debug mode, typically an opt-level=0 compilation. Since their inception codegen units have matured quite a bit, gaining features such as:

* Parallel translation and codegen, enabling codegen units to get worked on even more quickly.

* Deterministic and reliable partitioning through the same infrastructure as incremental compilation.

* Global rate limiting through the `jobserver` crate to avoid overloading the system.

The largest benefit of codegen units has always been faster compilation through parallel processing of modules on the LLVM side of things, using all the cores available on build machines, which typically have many. Some downsides have been fixed through the features above, but the major remaining downside is that using codegen units reduces opportunities for inlining and optimization. This, however, doesn't matter much during debug builds!

In this commit the default number of codegen units for debug builds has been raised from 1 to 32. This should enable most `cargo build` compiles that are bottlenecked on translation and/or code generation to immediately see speedups through parallelization on available cores.

Work is being done to *always* enable multiple codegen units (and therefore parallel codegen), but it requires at least #44841 to be landed and stabilized, so stay tuned if you're interested in that aspect!
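The defaulting rule described above can be sketched as follows, assuming an explicit `-C codegen-units=N` always wins and treating the opt-level as a plain number for simplicity; `effective_codegen_units` is a hypothetical helper, not rustc's actual option-handling code:

```rust
/// Hypothetical helper: pick the number of codegen units for a compilation.
/// `explicit` is the value from `-C codegen-units=N`, if the user passed one.
fn effective_codegen_units(explicit: Option<usize>, opt_level: u8) -> usize {
    match explicit {
        // A user-specified value always takes precedence.
        Some(n) => n,
        // Debug builds (opt-level=0) now default to 32 units...
        None if opt_level == 0 => 32,
        // ...while optimized builds keep the previous default of 1.
        None => 1,
    }
}
```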

This commit removes the `dep_graph` field from the `Session` type according to issue #44390. Most of the fallout here was relatively straightforward, and the `prepare_session_directory` function was rejiggered a bit to reuse the results in the later-called `load_dep_graph` function.

Closes #44390

Add `TargetOptions::min_global_align`, with s390x at 16-bit

The SystemZ `LARL` instruction provides PC-relative addressing for globals, but only to *even* addresses, so other compilers make sure that such globals are always 2-byte aligned. In Clang, this is modeled with `TargetInfo::MinGlobalAlign`, and `TargetOptions::min_global_align` now serves the same purpose for rustc.
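A sketch of how such a target option might combine with a global's natural alignment, assuming (as the title's "16-bit" suggests) that the option is expressed in bits and that the effective alignment is the larger of the two; `effective_global_align` is a hypothetical helper:

```rust
/// Hypothetical helper: alignment in bytes to request for a global.
/// `min_global_align_bits` mirrors a per-target option such as s390x's 16 bits.
fn effective_global_align(natural_align_bytes: u64, min_global_align_bits: Option<u64>) -> u64 {
    match min_global_align_bits {
        // Convert the bit-based minimum to bytes and never lower the natural alignment.
        Some(bits) => natural_align_bytes.max(bits / 8),
        // Targets without the option keep the type's own alignment.
        None => natural_align_bytes,
    }
}
```

On s390x a `u8` global (natural alignment 1) would thus be placed at an even address, keeping it reachable by `LARL`, while an 8-byte-aligned global is unaffected.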

In Clang, the only targets that set this are SystemZ, Lanai, and NVPTX, and the latter two don't have targets in rust master.

Fixes #44411.

r? @eddyb

Fix sanitizer tests on buggy kernels

Travis recently pushed an update to the Linux environments, namely the kernels that we're running on. This in turn caused some of the sanitizer tests we run to fail. We also apparently weren't the first to hit these failures! As detailed in google/sanitizers#837, these tests were failing due to a specific commit in the kernel which has since been backed out. For now we work around the buggy kernel that's deployed on Travis, and eventually we should be able to remove these flags.

This commit adds logic to the compiler to attempt to handle super long linker invocations by falling back to the `@`-file syntax if the invoked command is too large. Each OS has a limit on how many arguments and how large the arguments can be when spawning a new process, and linkers tend to be one of those programs that can hit the limit!

The logic implemented here is to unconditionally attempt to spawn a linker and then, if it fails to spawn with an error from the OS indicating that the command line is too big, attempt a fallback. The fallback is roughly the same for all linkers: a single argument pointing to a file, prepended with `@`, is passed. This file then contains all the various arguments that we want to pass to the linker.
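A sketch of the two pieces of the fallback, under stated assumptions: the "too big" check compares raw OS error codes (`E2BIG`, 7, on Linux and macOS; `ERROR_FILENAME_EXCED_RANGE`, 206, on Windows), and the response file uses GNU-style quoting. Both function names are hypothetical, and MSVC's quoting rules are not handled:

```rust
use std::fs;
use std::io;
use std::path::Path;

/// Hypothetical check: did spawning fail because the command line was too long?
fn command_line_too_big(err: &io::Error) -> bool {
    // E2BIG ("Argument list too long") is errno 7 on Linux and macOS.
    #[cfg(unix)]
    const TOO_BIG: i32 = 7;
    // ERROR_FILENAME_EXCED_RANGE is what Windows reports for overlong command lines.
    #[cfg(windows)]
    const TOO_BIG: i32 = 206;
    err.raw_os_error() == Some(TOO_BIG)
}

/// Hypothetical fallback: write every argument into a response file and
/// return the single `@path` argument to pass to the linker instead.
fn write_response_file(path: &Path, args: &[&str]) -> io::Result<String> {
    let mut contents = String::new();
    for arg in args {
        contents.push('"');
        for c in arg.chars() {
            // Escape characters that are special inside a quoted argument (GNU style).
            if c == '"' || c == '\\' {
                contents.push('\\');
            }
            contents.push(c);
        }
        contents.push_str("\"\n");
    }
    fs::write(path, contents)?;
    Ok(format!("@{}", path.display()))
}
```

A caller would try `Command::spawn` with the full argument list first, and only when `command_line_too_big` returns true re-run the linker with just the returned `@path` argument.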

Closes #41190

These fixes all have to do with the 64-bit PowerPC ELF ABI for big-endian targets. The ELF v2 ABI for powerpc64le already worked well.

- Return after marking return aggregates indirect. Fixes #42757.

- Pass one-member float aggregates as direct argument values.

- Aggregate arguments less than 64-bit must be written in the least-significant bits of the parameter space.

- Larger aggregates are instead padded at the tail (i.e. filling MSBs, padding the remaining LSBs).
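A sketch of the placement rule from the last two bullets, assuming 8-byte (64-bit) parameter slots in big-endian byte order, where the least-significant bits live at the highest addresses. `offset_in_first_slot` and `tail_padding` are hypothetical helpers, not the compiler's ABI code:

```rust
/// Size in bytes of one 64-bit parameter slot.
const SLOT: u64 = 8;

/// Hypothetical helper: byte offset within its first parameter slot at which a
/// by-value aggregate of `size` bytes starts on big-endian powerpc64.
fn offset_in_first_slot(size: u64) -> u64 {
    if size < SLOT {
        // Small aggregates fill the least-significant (highest-addressed) bytes,
        // so the padding comes first.
        SLOT - size
    } else {
        // Larger aggregates start at the slot boundary (filling MSBs first).
        0
    }
}

/// Hypothetical helper: padding after an aggregate of `size` bytes that is
/// padded at the tail, rounding it up to whole slots.
fn tail_padding(size: u64) -> u64 {
    (SLOT - size % SLOT) % SLOT
}
```

For the 3-byte aggregate mentioned below, this places the data in bytes 5..8 of its slot, i.e. in the LSBs.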

New tests were also added for the single-float aggregate, and a 3-byte aggregate to check that it's filled into LSBs. Overall, at least these formerly-failing tests now pass on powerpc64:

- run-make/extern-fn-struct-passing-abi

- run-make/extern-fn-with-packed-struct

- run-pass/extern-pass-TwoU16s.rs

- run-pass/extern-pass-TwoU8s.rs

- run-pass/struct-return.rs

This is a follow-up to f189d7a693 and 9d11b089ad. While `-z ignore` is what needs to be passed to the Solaris linker, because gcc is used as the default linker, both that form and `-Wl,-z -Wl,ignore` (including extra double quotes) need to be taken into account, which explains the more complex regular expression.
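The regular expression itself isn't reproduced here. As a rough stand-in, a plain-string check that accepts both spellings, after dropping any double quotes around the arguments, could look like this; `passes_z_ignore` is a hypothetical name:

```rust
/// Hypothetical check: does a linker command line pass `-z ignore`, either
/// directly or forwarded through gcc as `-Wl,-z -Wl,ignore`?
fn passes_z_ignore(cmdline: &str) -> bool {
    // Strip double quotes so `"-Wl,-z" "-Wl,ignore"` matches the unquoted form.
    let unquoted: String = cmdline.chars().filter(|&c| c != '"').collect();
    unquoted.contains("-z ignore") || unquoted.contains("-Wl,-z -Wl,ignore")
}
```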

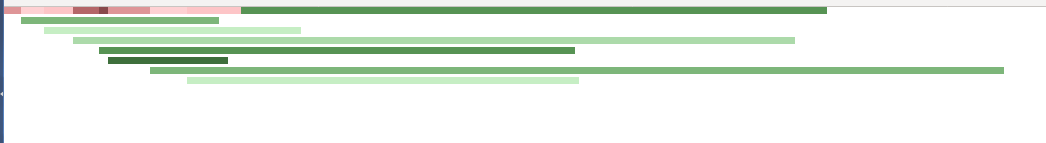

Run translation and LLVM in parallel when compiling with multiple CGUs

This is still a work in progress but the bulk of the implementation is done, so I thought it would be good to get it in front of more eyes.

This PR makes the compiler start running LLVM while translation is still in progress, effectively allowing for more parallelism towards the end of the compilation pipeline. It also allows the main thread to switch between translation and running LLVM, which reduces peak memory usage since not all LLVM modules have to be kept in memory until linking. This is especially good for incr. comp., but it works just as well when running with `-Ccodegen-units=N`.
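A toy sketch of the overlap, using only `std` threads and channels: one thread "translates" modules and hands each to worker threads as soon as it is ready, instead of waiting for translation to finish. Everything here (the function name, the `* 10` stand-in for LLVM work, the queue bound of 2) is a hypothetical illustration; the real scheduler also lets the main thread switch to running LLVM itself, which this sketch does not model:

```rust
use std::sync::mpsc;
use std::sync::{Arc, Mutex};
use std::thread;

/// Hypothetical sketch: translate `n_units` modules on the calling thread while
/// `n_workers` threads "optimize" them concurrently.
fn pipelined_build(n_units: u32, n_workers: usize) -> Vec<u32> {
    assert!(n_workers > 0, "need at least one worker to drain the queue");
    // A bounded channel caps how many translated modules wait in memory.
    let (work_tx, work_rx) = mpsc::sync_channel::<u32>(2);
    let work_rx = Arc::new(Mutex::new(work_rx));
    let (done_tx, done_rx) = mpsc::channel::<u32>();

    let workers: Vec<_> = (0..n_workers)
        .map(|_| {
            let rx = Arc::clone(&work_rx);
            let done = done_tx.clone();
            thread::spawn(move || loop {
                // Take the next module; the lock is released at the end of this statement.
                let next = rx.lock().unwrap().recv();
                match next {
                    // Stand-in for optimizing and emitting one module.
                    Ok(unit) => done.send(unit * 10).unwrap(),
                    // Channel closed: translation has finished.
                    Err(_) => break,
                }
            })
        })
        .collect();
    drop(done_tx);

    for unit in 0..n_units {
        // Stand-in for translating one codegen unit, then queueing it for LLVM.
        work_tx.send(unit).unwrap();
    }
    drop(work_tx);

    for w in workers {
        w.join().unwrap();
    }
    let mut results: Vec<u32> = done_rx.iter().collect();
    results.sort();
    results
}
```

The bounded queue is the piece that mirrors the memory argument above: translation blocks once a couple of modules are waiting, rather than holding every module until the end.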

In order to help tune and debug the work scheduler, the PR adds the `-Ztrans-time-graph` flag, which spits out HTML files that show how work packages were scheduled:

(red is translation, green is llvm)

One side effect here is that `-Ztime-passes` might show something not quite correct because trans and LLVM are not strictly separated anymore. I plan to have some special handling there that will try to produce useful output.

One open question is how to determine whether the trans-thread should switch to intermediate LLVM processing.

TODO:

- [x] Restore `-Z time-passes` output for LLVM.

- [x] Update documentation, esp. for work package scheduling.

- [x] Tune the scheduling algorithm.

cc @alexcrichton @rust-lang/compiler

The function should accept feature strings that old LLVM might not support.

Simplify the code using the same approach used by LLVMRustPrintTargetFeatures.

Dummify the function for non 4.0 LLVM and update the tests accordingly.

Add support for dylibs with Address Sanitizer

Many applications use address sanitizer to assert correct behaviour of their programs. When using Rust with C, it's even more important to assert correct programs with tools like asan/lsan due to the unsafe nature of access across an FFI boundary. However, previously only Rust `bin` crate types could use asan. This posed a challenge for existing C applications, compiled with asan, that link or `dlopen` a Rust `.so`.

This PR enables asan to be linked into the dylib and cdylib crate types. We alter the test to check that the proc-macro crate type does not work with `-Z sanitizer=address`. Finally, we add a test that compiles a shared object in Rust, which another Rust program then links against, demonstrating a crash through the call into the library.

This PR is nearly complete, but I do require advice on the change to fix the `-lasan` that currently exists in the dylib test. This is required because the link statement is not being added correctly to the rustc build when `-Z sanitizer=address` is added (and I'm not 100% sure why).

Thanks,

Note different versions of same crate when absolute paths of different types match.

The current check to address #22750 only works when the paths of the mismatched types relative to the current crate are equal, but this does not always work if one of the types is only included through an indirect dependency. If reexports are involved, the indirectly included path can e.g. [contain private modules](https://github.com/rust-lang/rust/issues/22750#issuecomment-302755516).

This PR takes care of these cases by also comparing the *absolute* path, which is equal if the type hasn't moved in the module hierarchy between versions. A more coarse check would be to compare only the crate names instead of full paths, but that might lead to too many false positives.

Additionally, I believe it would be helpful to show where the differing crates came from, i.e. the information in `rustc::middle::cstore::CrateSource`, but I'm not sure yet how to nicely display all of that, so I'm leaving it to a future PR.

This PR is an implementation of [RFC 1974], which specifies a new method of defining a global allocator for a program. This obsoletes the old `#![allocator]` attribute and also removes support for it.

[RFC 1974]: https://github.com/rust-lang/rfcs/pull/197

The new `#[global_allocator]` attribute solves many issues encountered with the `#![allocator]` attribute, such as composition and restrictions on the crate graph itself. The compiler now has much more control over the ABI of the allocator and how it's implemented, allowing much more freedom in terms of how this feature is implemented.
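The `#[global_allocator]` attribute survives in today's Rust, although the allocator trait and its paths settled later (as `GlobalAlloc` in `std::alloc`) and differ from what this PR first introduced. Under that assumption, a hypothetical counting allocator that forwards to the system allocator illustrates the attribute:

```rust
use std::alloc::{GlobalAlloc, Layout, System};
use std::sync::atomic::{AtomicUsize, Ordering};

/// Hypothetical allocator: forwards to the system allocator and counts live bytes.
struct CountingAlloc;

static LIVE_BYTES: AtomicUsize = AtomicUsize::new(0);

unsafe impl GlobalAlloc for CountingAlloc {
    unsafe fn alloc(&self, layout: Layout) -> *mut u8 {
        let ptr = unsafe { System.alloc(layout) };
        if !ptr.is_null() {
            LIVE_BYTES.fetch_add(layout.size(), Ordering::Relaxed);
        }
        ptr
    }

    unsafe fn dealloc(&self, ptr: *mut u8, layout: Layout) {
        unsafe { System.dealloc(ptr, layout) };
        LIVE_BYTES.fetch_sub(layout.size(), Ordering::Relaxed);
    }
}

// Registers the static as the allocator for the whole program.
#[global_allocator]
static GLOBAL: CountingAlloc = CountingAlloc;

/// Bytes currently allocated through `GLOBAL`.
fn live_bytes() -> usize {
    LIVE_BYTES.load(Ordering::Relaxed)
}
```

Because the allocator is an ordinary static chosen by attribute, it composes with the rest of the crate graph: any crate can depend on `CountingAlloc`, and only the one crate that applies `#[global_allocator]` selects it.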

cc #27389

Prior to this PR, when we aborted because a "critical pass" failed, we displayed the number of errors from that critical pass. While that's the number of errors that caused compilation to abort in *that place*, that's not what people really want to know. Instead, always report the total number of errors, and don't bother to track the number of errors from the last pass that failed.

This changes the compiler driver API to handle errors more smoothly, and therefore is a compiler-api-[breaking-change].

Fixes #42793.

A long time coming, this commit removes the `flate` crate in favor of the `flate2` crate on crates.io. The functionality in `flate2` originally flowered out of `flate` itself and is additionally the namesake for the crate. This will leave a gap in the naming (there's no `flate` crate), which will likely cause a particle collapse of some form somewhere.

save-analysis: remove a lot of stuff

This commits us to the JSON format and the more general def/ref style of output, rather than also supporting different data formats for different data structures. This does not affect the RLS at all, but will break any clients of the CSV form - AFAIK there are none (beyond a few of my own toy projects) - DXR stopped working long ago.

r? @eddyb

Build instruction profiler runtime as part of compiler-rt

r? @alexcrichton

This is #38608 with some fixes.

Still missing:

- [x] testing with profiler enabled on some builders (on which ones? Should I add the option to some of the already existing configurations, or create a new configuration?);

- [x] enabling distribution (on which builders?);

- [x] documentation.

Currently rustdoc will fail if passed `-o foo/doc` when the `foo` directory doesn't exist.

Also remove an unneeded `mkdir`, as `create_dir_all` can now handle concurrent invocations.
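The fix boils down to creating the whole output path up front. A minimal sketch, with `prepare_output_dir` as a hypothetical name rather than rustdoc's actual function:

```rust
use std::fs;
use std::io;
use std::path::Path;

/// Hypothetical sketch: make sure the output directory, and any missing
/// parents such as `foo` in `-o foo/doc`, exists before writing docs into it.
fn prepare_output_dir(out_dir: &Path) -> io::Result<()> {
    // `create_dir_all` succeeds if the directory already exists, so repeated
    // or concurrent calls are harmless and no separate `mkdir` is needed.
    fs::create_dir_all(out_dir)
}
```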