Document the behaviour of infinite iterators on potentially-computable methods

It’s not entirely clear from the current documentation what behaviour

calling a method such as `min` on an infinite iterator like `RangeFrom`

is. One might expect this to terminate, but in fact, for infinite

iterators, `min` is always nonterminating (at least in the standard

library). This adds a quick note about this behaviour for clarification.

`match`ing on an `Option<Ordering>` seems cause some confusion for LLVM; switching to just using comparison operators removes a few jumps from the simple `for` loops I was trying.

Implement TrustedLen for Take<Repeat> and Take<RangeFrom>

This will allow optimization of simple `repeat(x).take(n).collect()` iterators, which are currently not vectorized and have capacity checks.

This will only support a few aggregates on `Repeat` and `RangeFrom`, which might be enough for simple cases, but doesn't optimize more complex ones. Namely, Cycle, StepBy, Filter, FilterMap, Peekable, SkipWhile, Skip, FlatMap, Fuse and Inspect are not marked `TrustedLen` when the inner iterator is infinite.

Previous discussion can be found in #47082

r? @alexcrichton

Add filtering options to `rustc_on_unimplemented`

- Add filtering options to `rustc_on_unimplemented` for local traits, filtering on `Self` and type arguments.

- Add a way to provide custom notes.

- Tweak binops text.

- Add filter to detect wether `Self` is local or belongs to another crate.

- Add filter to `Iterator` diagnostic for `&str`.

Partly addresses #44755 with a different syntax, as a first approach. Fixes#46216, fixes#37522, CC #34297, #46806.

Because the last item needs special handling, it seems that LLVM has trouble canonicalizing the loops in external iteration. With the override, it becomes obvious that the start==end case exits the loop (as opposed to the one *after* that exiting the loop in external iteration).

It’s not entirely clear from the current documentation what behaviour

calling a method such as `min` on an infinite iterator like `RangeFrom`

is. One might expect this to terminate, but in fact, for infinite

iterators, `min` is always nonterminating (at least in the standard

library). This adds a quick note about this behaviour for clarification.

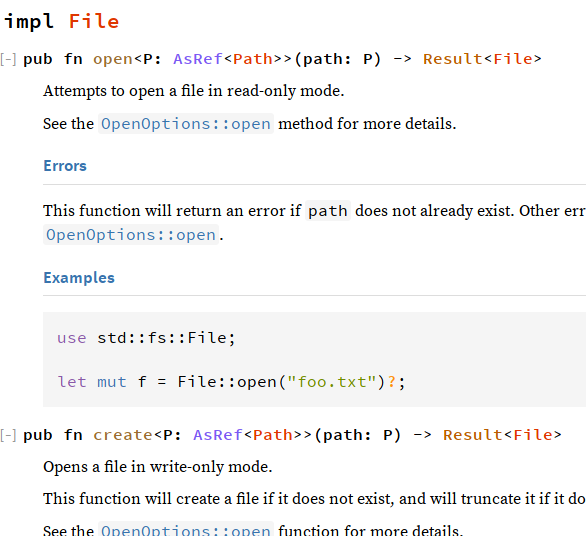

show in docs whether the return type of a function impls Iterator/Read/Write

Closes#25928

This PR makes it so that when rustdoc documents a function, it checks the return type to see whether it implements a handful of specific traits. If so, it will print the impl and any associated types. Rather than doing this via a whitelist within rustdoc, i chose to do this by a new `#[doc]` attribute parameter, so things like `Future` could tap into this if desired.

### Known shortcomings

~~The printing of impls currently uses the `where` class over the whole thing to shrink the font size relative to the function definition itself. Naturally, when the impl has a where clause of its own, it gets shrunken even further:~~ (This is no longer a problem because the design changed and rendered this concern moot.)

The lookup currently just looks at the top-level type, not looking inside things like Result or Option, which renders the spotlights on Read/Write a little less useful:

<details><summary>`File::{open, create}` don't have spotlight info (pic of old design)</summary>

</details>

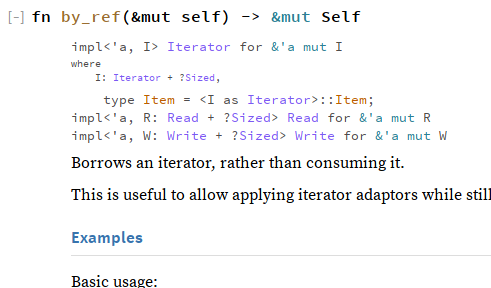

All three of the initially spotlighted traits are generically implemented on `&mut` references. Rustdoc currently treats a `&mut T` reference-to-a-generic as an impl on the reference primitive itself. `&mut Self` counts as a generic in the eyes of rustdoc. All this combines to create this lovely scene on `Iterator::by_ref`:

<details><summary>`Iterator::by_ref` spotlights Iterator, Read, and Write (pic of old design)</summary>

</details>

This is the core method in terms of which the other methods (fold, all, any, find, position, nth, ...) can be implemented, allowing Iterator implementors to get the full goodness of internal iteration by only overriding one method (per direction).

Improve wording for StepBy

No other iterator makes the distinction between an iterator and an iterator adapter

in its summary line, so change it to be consistent with all other adapters.

Add blanket TryFrom impl when From is implemented.

Adds `impl<T, U> TryFrom<T> for U where U: From<T>`.

Removes `impl<'a, T> TryFrom<&'a str> for T where T: FromStr` (originally added in #40281) due to overlapping impls caused by the new blanket impl. This removal is to be discussed further on the tracking issue for TryFrom.

Refs #33417.

/cc @sfackler, @scottmcm (thank you for the help!), and @aturon

Add more custom folding to `core::iter` adaptors

Many of the iterator adaptors will perform faster folds if they forward

to their inner iterator's folds, especially for inner types like `Chain`

which are optimized too. The following types are newly specialized:

| Type | `fold` | `rfold` |

| ----------- | ------ | ------- |

| `Enumerate` | ✓ | ✓ |

| `Filter` | ✓ | ✓ |

| `FilterMap` | ✓ | ✓ |

| `FlatMap` | exists | ✓ |

| `Fuse` | ✓ | ✓ |

| `Inspect` | ✓ | ✓ |

| `Peekable` | ✓ | N/A¹ |

| `Skip` | ✓ | N/A² |

| `SkipWhile` | ✓ | N/A¹ |

¹ not a `DoubleEndedIterator`

² `Skip::next_back` doesn't pull skipped items at all, but this couldn't

be avoided if `Skip::rfold` were to call its inner iterator's `rfold`.

Benchmarks

----------

In the following results, plain `_sum` computes the sum of a million

integers -- note that `sum()` is implemented with `fold()`. The

`_ref_sum` variants do the same on a `by_ref()` iterator, which is

limited to calling `next()` one by one, without specialized `fold`.

The `chain` variants perform the same tests on two iterators chained

together, to show a greater benefit of forwarding `fold` internally.

test iter::bench_enumerate_chain_ref_sum ... bench: 2,216,264 ns/iter (+/- 29,228)

test iter::bench_enumerate_chain_sum ... bench: 922,380 ns/iter (+/- 2,676)

test iter::bench_enumerate_ref_sum ... bench: 476,094 ns/iter (+/- 7,110)

test iter::bench_enumerate_sum ... bench: 476,438 ns/iter (+/- 3,334)

test iter::bench_filter_chain_ref_sum ... bench: 2,266,095 ns/iter (+/- 6,051)

test iter::bench_filter_chain_sum ... bench: 745,594 ns/iter (+/- 2,013)

test iter::bench_filter_ref_sum ... bench: 889,696 ns/iter (+/- 1,188)

test iter::bench_filter_sum ... bench: 667,325 ns/iter (+/- 1,894)

test iter::bench_filter_map_chain_ref_sum ... bench: 2,259,195 ns/iter (+/- 353,440)

test iter::bench_filter_map_chain_sum ... bench: 1,223,280 ns/iter (+/- 1,972)

test iter::bench_filter_map_ref_sum ... bench: 611,607 ns/iter (+/- 2,507)

test iter::bench_filter_map_sum ... bench: 611,610 ns/iter (+/- 472)

test iter::bench_fuse_chain_ref_sum ... bench: 2,246,106 ns/iter (+/- 22,395)

test iter::bench_fuse_chain_sum ... bench: 634,887 ns/iter (+/- 1,341)

test iter::bench_fuse_ref_sum ... bench: 444,816 ns/iter (+/- 1,748)

test iter::bench_fuse_sum ... bench: 316,954 ns/iter (+/- 2,616)

test iter::bench_inspect_chain_ref_sum ... bench: 2,245,431 ns/iter (+/- 21,371)

test iter::bench_inspect_chain_sum ... bench: 631,645 ns/iter (+/- 4,928)

test iter::bench_inspect_ref_sum ... bench: 317,437 ns/iter (+/- 702)

test iter::bench_inspect_sum ... bench: 315,942 ns/iter (+/- 4,320)

test iter::bench_peekable_chain_ref_sum ... bench: 2,243,585 ns/iter (+/- 12,186)

test iter::bench_peekable_chain_sum ... bench: 634,848 ns/iter (+/- 1,712)

test iter::bench_peekable_ref_sum ... bench: 444,808 ns/iter (+/- 480)

test iter::bench_peekable_sum ... bench: 317,133 ns/iter (+/- 3,309)

test iter::bench_skip_chain_ref_sum ... bench: 1,778,734 ns/iter (+/- 2,198)

test iter::bench_skip_chain_sum ... bench: 761,850 ns/iter (+/- 1,645)

test iter::bench_skip_ref_sum ... bench: 478,207 ns/iter (+/- 119,252)

test iter::bench_skip_sum ... bench: 315,614 ns/iter (+/- 3,054)

test iter::bench_skip_while_chain_ref_sum ... bench: 2,486,370 ns/iter (+/- 4,845)

test iter::bench_skip_while_chain_sum ... bench: 633,915 ns/iter (+/- 5,892)

test iter::bench_skip_while_ref_sum ... bench: 666,926 ns/iter (+/- 804)

test iter::bench_skip_while_sum ... bench: 444,405 ns/iter (+/- 571)

Many of the iterator adaptors will perform faster folds if they forward

to their inner iterator's folds, especially for inner types like `Chain`

which are optimized too. The following types are newly specialized:

| Type | `fold` | `rfold` |

| ----------- | ------ | ------- |

| `Enumerate` | ✓ | ✓ |

| `Filter` | ✓ | ✓ |

| `FilterMap` | ✓ | ✓ |

| `FlatMap` | exists | ✓ |

| `Fuse` | ✓ | ✓ |

| `Inspect` | ✓ | ✓ |

| `Peekable` | ✓ | N/A¹ |

| `Skip` | ✓ | N/A² |

| `SkipWhile` | ✓ | N/A¹ |

¹ not a `DoubleEndedIterator`

² `Skip::next_back` doesn't pull skipped items at all, but this couldn't

be avoided if `Skip::rfold` were to call its inner iterator's `rfold`.

Benchmarks

----------

In the following results, plain `_sum` computes the sum of a million

integers -- note that `sum()` is implemented with `fold()`. The

`_ref_sum` variants do the same on a `by_ref()` iterator, which is

limited to calling `next()` one by one, without specialized `fold`.

The `chain` variants perform the same tests on two iterators chained

together, to show a greater benefit of forwarding `fold` internally.

test iter::bench_enumerate_chain_ref_sum ... bench: 2,216,264 ns/iter (+/- 29,228)

test iter::bench_enumerate_chain_sum ... bench: 922,380 ns/iter (+/- 2,676)

test iter::bench_enumerate_ref_sum ... bench: 476,094 ns/iter (+/- 7,110)

test iter::bench_enumerate_sum ... bench: 476,438 ns/iter (+/- 3,334)

test iter::bench_filter_chain_ref_sum ... bench: 2,266,095 ns/iter (+/- 6,051)

test iter::bench_filter_chain_sum ... bench: 745,594 ns/iter (+/- 2,013)

test iter::bench_filter_ref_sum ... bench: 889,696 ns/iter (+/- 1,188)

test iter::bench_filter_sum ... bench: 667,325 ns/iter (+/- 1,894)

test iter::bench_filter_map_chain_ref_sum ... bench: 2,259,195 ns/iter (+/- 353,440)

test iter::bench_filter_map_chain_sum ... bench: 1,223,280 ns/iter (+/- 1,972)

test iter::bench_filter_map_ref_sum ... bench: 611,607 ns/iter (+/- 2,507)

test iter::bench_filter_map_sum ... bench: 611,610 ns/iter (+/- 472)

test iter::bench_fuse_chain_ref_sum ... bench: 2,246,106 ns/iter (+/- 22,395)

test iter::bench_fuse_chain_sum ... bench: 634,887 ns/iter (+/- 1,341)

test iter::bench_fuse_ref_sum ... bench: 444,816 ns/iter (+/- 1,748)

test iter::bench_fuse_sum ... bench: 316,954 ns/iter (+/- 2,616)

test iter::bench_inspect_chain_ref_sum ... bench: 2,245,431 ns/iter (+/- 21,371)

test iter::bench_inspect_chain_sum ... bench: 631,645 ns/iter (+/- 4,928)

test iter::bench_inspect_ref_sum ... bench: 317,437 ns/iter (+/- 702)

test iter::bench_inspect_sum ... bench: 315,942 ns/iter (+/- 4,320)

test iter::bench_peekable_chain_ref_sum ... bench: 2,243,585 ns/iter (+/- 12,186)

test iter::bench_peekable_chain_sum ... bench: 634,848 ns/iter (+/- 1,712)

test iter::bench_peekable_ref_sum ... bench: 444,808 ns/iter (+/- 480)

test iter::bench_peekable_sum ... bench: 317,133 ns/iter (+/- 3,309)

test iter::bench_skip_chain_ref_sum ... bench: 1,778,734 ns/iter (+/- 2,198)

test iter::bench_skip_chain_sum ... bench: 761,850 ns/iter (+/- 1,645)

test iter::bench_skip_ref_sum ... bench: 478,207 ns/iter (+/- 119,252)

test iter::bench_skip_sum ... bench: 315,614 ns/iter (+/- 3,054)

test iter::bench_skip_while_chain_ref_sum ... bench: 2,486,370 ns/iter (+/- 4,845)

test iter::bench_skip_while_chain_sum ... bench: 633,915 ns/iter (+/- 5,892)

test iter::bench_skip_while_ref_sum ... bench: 666,926 ns/iter (+/- 804)

test iter::bench_skip_while_sum ... bench: 444,405 ns/iter (+/- 571)

No other iterator makes the distinction between an iterator and an iterator adapter

in its summary line, so change it to be consistent with all other adapters.

This verifies that TrustedRandomAccess has no side effects when the

iterator item implements Copy. This also implements TrustedLen and

TrustedRandomAccess for str::Bytes.

Add iterator method .rfold(init, function); the reverse of fold

rfold is the reverse version of fold.

Fold allows iterators to implement a different (non-resumable) internal

iteration when it is more efficient than the external iteration implemented

through the next method. (Common examples are VecDeque and .chain()).

Introduce rfold() so that the same customization is available for reverse

iteration. This is achieved by both adding the method, and by having the

Rev\<I> adaptor connect Rev::rfold → I::fold and Rev::fold → I::rfold.

On the surface, rfold(..) is just .rev().fold(..), but the special case

implementations allow a data structure specific fold to be used through for

example .iter().rev(); we thus have gains even for users never calling exactly

rfold themselves.